Something is happening with Google’s index — or nothing is, depending on who you ask.

Since early April 2026, SEO professionals across LinkedIn, X, and SEO industry blogs have been comparing notes on the same observation: pages are disappearing from Google’s search index at rates they haven’t seen before. One mid-sized site’s Search Console graph circulating in these threads tells the story plainly — indexed pages slid from roughly 225,000 to 155,000 over twelve weeks, with the steepest cut happening after the March 2026 core update.

Google says nothing unusual is happening.

Both things are probably true at once. Here’s what the evidence actually supports, what’s still speculation, and what site owners should do about it before the next core update lands.

What the SEO community is reporting

The current discussion traces back to Pedro Dias, a former Googler, who posted on X asking whether others were seeing a higher rate of random deindexing since the start of April. The thread took off immediately, with hundreds of replies confirming the pattern.

Search Engine Roundtable’s Barry Schwartz picked up the thread and published “Google Search May Be Deindexing URLs At Higher Rates” on April 30, 2026. He summarized the community responses and asked John Mueller, Google’s Search Advocate, for comment. Mueller’s reply was unequivocal:

“Some sites go up, some sites go down — I don’t see anything exceptional there.”

That’s not a denial that pages are being removed. It’s a denial that the removal rate is unusual. Those are different claims, and the distinction matters.

Schwartz’s piece sparked follow-up coverage. Optimixed’s recap emphasized the pattern of quality and freshness criteria tightening. Digital Phablet framed it as “rising rates” but flagged Mueller’s pushback. News.opositive.io went further, calling it a “panic” — though that piece reads more like industry reaction than primary reporting.

The LinkedIn comment threads following these posts surface a familiar handful of theories:

- Cory: freshness and quality components are purging stale content to reduce index size.

- Valentin Pletzer: some of this could be a Google Search Console reporting bug rather than actual deindexing.

- Alex Gramm: the same pattern has been visible since December 2025, so the trend isn’t strictly an April phenomenon; it accelerated then.

- Stefano Galloni of K-Hub framed the underlying logic cleanly: “It takes much more value to stay in.”

- Zander Chrystall added the point that probably matters most: pages out of Google’s index are also out of the AI Overviews pool. Index health and AI visibility are converging into the same problem.

The historical context most coverage missed

This isn’t the first time Google has done a quiet, large-scale index purge. In May 2025, Indexing Insight published a study showing that across 2 million monitored URLs, over 25% were actively removed from Google’s index in a roughly 30-day window. Sites in the study lost between 15% and 75% of their indexed pages. The pattern: pages with low or zero search performance — minimal clicks, impressions near zero — were the ones purged.

That 2025 purge happened right before the June 2025 core update. The current 2026 wave is happening right after the March 2026 spam update and the March 2026 core update completed. Same playbook, different timing.

This is worth saying plainly: Google has done this before and will do it again. The “is something exceptional happening?” question Mueller answered is technically defensible. From inside Google, this looks like the system is working normally. From outside, watching an indexed page count drop 30% in twelve weeks doesn’t feel normal.

Three plausible causes — all consistent with the data

There’s no single confirmed explanation, but three theories fit the available evidence, and they’re not mutually exclusive.

1. Quality threshold raised after the March 2026 updates. The March 2026 spam update was completed in late March, and the first core update of 2026 followed at the end of the month. Index purges of low-engagement content around core updates are documented Google behavior: the May 2025 wave hit the same kind of pages, although it landed shortly before the June 2025 core update rather than after one. Pages that were marginal before the threshold was raised become unindexable after it.

2. AI content saturation is forcing harder selectivity. This is the theory most current coverage gravitates toward, and it’s directionally correct but oversold. AI-generated content has flooded the open web faster than Google’s quality systems can keep up with. The infrastructure response, selective indexing rather than indexing everything by default, was inevitable. What’s happening now is the operationalization of that shift, not its trigger.

3. Reporting and measurement artifacts. Pletzer’s bug-report theory shouldn’t be dismissed. Google Search Console’s Page indexing report (formerly Index Coverage) is sampled, batched, and lagged. A perceived deindexing wave can be partly real removal and partly the reporting catching up to a state that was already true. That would explain why some operators see dramatic drops in indexed pages while their actual organic traffic moves less than expected.
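One rough way to tell real removal from reporting lag or low-value pruning is to compare the relative drop in indexed pages with the relative drop in organic clicks over the same window. The sketch below is illustrative only: the function names, the 0.5 ratio cutoff, and the click figures in the usage note are assumptions, not values from Search Console or any study cited here.

```python
def relative_drop(before: float, after: float) -> float:
    """Fractional decline from `before` to `after` (0.31 means a 31% drop)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


def classify_drop(indexed_before: float, indexed_after: float,
                  clicks_before: float, clicks_after: float,
                  ratio_cutoff: float = 0.5) -> str:
    """Label an index decline by how much of it shows up in clicks.

    ratio_cutoff is an arbitrary, hypothetical threshold: if clicks fell by
    less than half as much as indexed pages did, the removed pages were
    probably not carrying much traffic.
    """
    index_drop = relative_drop(indexed_before, indexed_after)
    click_drop = relative_drop(clicks_before, clicks_after)
    if index_drop <= 0:
        return "no index decline"
    if click_drop / index_drop < ratio_cutoff:
        return "mostly low-traffic pages or reporting lag"
    return "traffic-bearing pages affected"
```

For the 225,000 to 155,000 example, `relative_drop(225_000, 155_000)` is about 0.31. If clicks over the same period only moved from a hypothetical 10,000 to 9,500, the function returns the first label, which suggests the lost pages were already contributing little.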

The honest answer is probably some weighted combination of all three. The relevant point for site owners isn’t which theory wins — the response is the same regardless.

What’s actually noise?

A few claims circulating in the LinkedIn and X threads need pushback.

The “21% overall deindexing rate across 16M webpages, 14% removed within 3 months” figure shows up in several promotional comments. Those numbers come from the May 2025 Indexing Insight study, not 2026 data. Citing them as current evidence is a reach, especially when the comment is attached to a pitch for an AI SEO product.

The “internet is running out of space” framing circulates every time Google does anything visible. Google isn’t running out of storage. Selective indexing is a quality decision, not a capacity decision.

And the “Google is hiding something” conspiracy take should be ignored. Mueller’s statement is consistent with how Google actually operates: from inside the system, this looks like normal calibration. The information asymmetry is the story, not a cover-up.

What site owners should actually do

The deindexing pattern points to specific failure modes, each with a corresponding fix.

Audit which pages Google has dropped. If the counts for “Crawled - currently not indexed” and “Discovered - currently not indexed” in Google Search Console’s Page indexing report are growing, that’s the deindexing wave manifesting on the site. The pages most at risk are thin, duplicate, low-engagement, or stale.
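A minimal sketch of that audit, assuming two snapshots of indexed URLs are already available (for example, from periodic exports) along with per-URL click and impression counts. The `PageStats` type, the function names, and the `min_impressions` cutoff are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageStats:
    url: str
    clicks: int        # clicks over the lookback window
    impressions: int   # impressions over the lookback window


def dropped_pages(previously_indexed: set[str], currently_indexed: set[str]) -> set[str]:
    """URLs that were indexed in the earlier snapshot but are missing now."""
    return previously_indexed - currently_indexed


def triage(dropped: set[str], stats: dict[str, PageStats],
           min_impressions: int = 10) -> tuple[list[str], list[str]]:
    """Split dropped URLs into pages worth fixing and pages that were low value.

    A page counts as worth fixing if it had any clicks or at least
    min_impressions impressions (an assumed cutoff) before it was dropped.
    """
    worth_fixing, low_value = [], []
    for url in sorted(dropped):
        s = stats.get(url)
        if s is not None and (s.clicks > 0 or s.impressions >= min_impressions):
            worth_fixing.append(url)
        else:
            low_value.append(url)
    return worth_fixing, low_value
```

Pages in the first list are candidates for improvement and a reindex request; pages in the second are candidates for consolidation, redirects, or removal.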

Catch traffic before it dies on a 404. When a page falls out of the index and an old inbound link points to it, that link starts dropping users on a 404. The McCrossenSEO™ plugin’s 404 Monitor logs every 404 hit with a privacy-safe IP hash and bot filtering, then surfaces fuzzy-matched slug suggestions so a redirect can be created with one click. This is the difference between losing a backlink and rerouting it to the closest live page.
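To make the idea concrete, here is a small sketch of hashed-IP logging and fuzzy slug matching using only Python’s standard library. It is not the plugin’s implementation: the function names, the salt handling, and the 0.6 similarity cutoff are assumptions for illustration.

```python
import difflib
import hashlib


def hash_ip(ip: str, salt: str) -> str:
    """Salted SHA-256 of the visitor IP so the raw address is never stored.

    The digest is truncated to 16 hex characters for log readability.
    """
    return hashlib.sha256((salt + ip).encode("utf-8")).hexdigest()[:16]


def suggest_redirects(missing_slug: str, live_slugs: list[str],
                      limit: int = 3, cutoff: float = 0.6) -> list[str]:
    """Return up to `limit` live slugs that most resemble the missing one.

    difflib.get_close_matches ranks candidates by similarity ratio and
    drops any candidate scoring below `cutoff`.
    """
    return difflib.get_close_matches(missing_slug, live_slugs, n=limit, cutoff=cutoff)


# Example: a 404 on an old slug is matched to the closest live page.
live = ["google-deindexing-2026", "contact", "about-us"]
print(suggest_redirects("google-deindexing-2025", live))
```

A suggestion is only a candidate. Someone should still confirm that the target page actually covers the same topic before the redirect goes live.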

Strengthen structured data on the pages worth keeping. Schema markup helps Google understand what a page is about, and it may factor into which pages stay indexed, though Google has not confirmed that. McCrossenSEO™ ships Article, Product, FAQPage, Organization, and Breadcrumb schema with deduplication so themes and other plugins don’t double-emit. It also excludes out-of-stock products from sitemaps and noindexes them automatically; indexable out-of-stock product pages are a specific failure mode that’s been killing e-commerce sites in this wave.

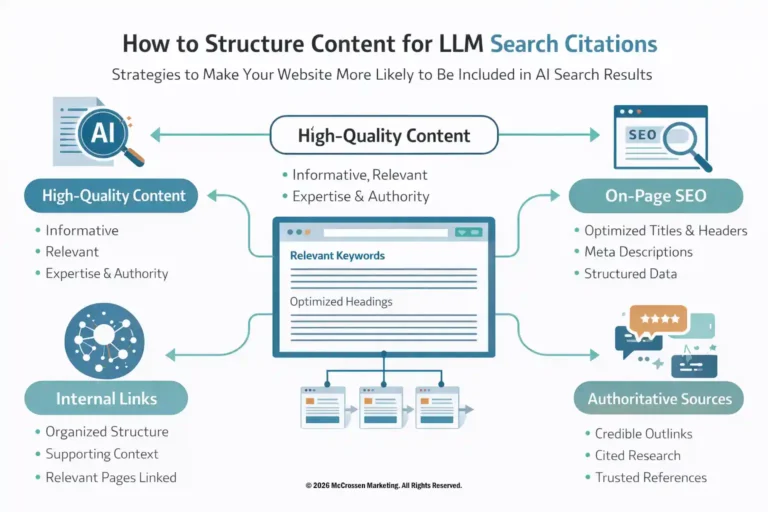
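The structured data steps can be sketched in a few functions: build an Article JSON-LD block, drop duplicate blocks of the same type, and filter out-of-stock products from a sitemap list. This is an illustration under stated assumptions, not the plugin’s code; the function names and the `in_stock` field are hypothetical, and deduplicating by `@type` alone is a simplification, since some pages legitimately carry several blocks of one type.

```python
import json


def article_jsonld(headline: str, url: str, date_published: str, author_name: str) -> dict:
    """Minimal schema.org Article block (date_published as an ISO 8601 string)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name},
    }


def dedupe_by_type(blocks: list[dict]) -> list[dict]:
    """Keep the first block per @type so a theme and a plugin don't both emit it."""
    seen, kept = set(), []
    for block in blocks:
        t = block.get("@type")
        key = tuple(t) if isinstance(t, list) else t  # @type may be a list
        if key in seen:
            continue
        seen.add(key)
        kept.append(block)
    return kept


def sitemap_urls(products: list[dict]) -> list[str]:
    """Only in-stock products go into the sitemap (in_stock is an assumed field)."""
    return [p["url"] for p in products if p.get("in_stock", False)]


def render_script_tag(block: dict) -> str:
    """Serialize a block for embedding; '</' is escaped so the tag can't close early."""
    payload = json.dumps(block).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"
```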

Publish llms.txt. AI Overviews and the LLM citation pool draw from a narrower set than the full web. McCrossenSEO™ generates and serves an llms.txt file describing the site’s content priorities for AI ingestion. llms.txt is still a proposed convention rather than a standard every AI crawler honors, so treat it as a low-cost hedge for sites that want to remain visible in AI answer surfaces, not just classic search.
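For reference, the llms.txt proposal describes a Markdown file with a title heading, a short blockquote summary, and sections of annotated links. The generator below is a minimal sketch of that layout, not the plugin’s output; the function name, the argument shapes, and the example.com URL are assumptions.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt body.

    sections maps a section heading to (title, url, note) tuples;
    an empty note is omitted from the link line.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical usage with placeholder content.
print(build_llms_txt(
    "Example Site",
    "Guides on technical SEO.",
    {"Guides": [("Index audit", "https://example.com/index-audit",
                 "How to audit indexed pages")]},
))
```

The result would be served as plain text at the site root, at `/llms.txt`.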

Score for quality, not keyword density. The McCrossen Marketing Platform’s URL Optimization scoring engine is built around quality signals — content depth, structured data presence, URL architecture, internal linking — rather than the keyword-stuffing heuristics Google has been actively penalizing. Sites optimized against the platform’s recommendations are scoring against the same axes Google’s quality threshold cares about.

Monitor before the next update. The 2025–2026 pattern suggests a core update every quarter is the new baseline. Sites with healthy, well-structured, schema-rich content survive these waves. Sites with thin pages, missing structured data, and 404 graveyards lose pages they didn’t realize were borderline.
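Monitoring can start as a week-over-week check on the indexed page count. In the sketch below, the function name and the 5% alert threshold are assumptions, and the weekly series is invented for illustration; only its endpoints (225,000 and 155,000) come from the example earlier in this article.

```python
def flag_drops(weekly_counts: list[int], threshold: float = 0.05) -> list[tuple[int, float]]:
    """Return (week_index, fractional_drop) for each week-over-week decline
    larger than `threshold`. Week 0 is the first entry in the series."""
    alerts = []
    for i in range(1, len(weekly_counts)):
        prev, cur = weekly_counts[i - 1], weekly_counts[i]
        if prev <= 0:
            continue  # cannot compute a relative drop from an empty baseline
        drop = (prev - cur) / prev
        if drop > threshold:
            alerts.append((i, round(drop, 3)))
    return alerts


# Invented intermediate values; only the first and last are from the article.
series = [225_000, 222_000, 200_000, 198_000, 155_000]
print(flag_drops(series))  # [(2, 0.099), (4, 0.217)]
```

Running a check like this on a schedule surfaces a sharp drop in the week it happens, not months later in a twelve-week graph.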

The bigger picture: index health is now AI visibility

Zander Chrystall’s point in the LinkedIn comments is the one most operators are still underweighting. Pages that fall out of Google’s index also fall out of the pool that AI Overviews, Google’s AI Mode, and the broader generative answer ecosystem draw from. The same is increasingly true for ChatGPT Search, Perplexity, and Bing’s AI features — they all start from indexed, retrievable web content.

This means the cost of deindexing is no longer “lost organic clicks.” It’s lost organic clicks plus lost AI citations plus lost AI Overview presence plus lost LLM answer inclusion. A page quietly dropped from Google’s index in 2026 is dropping out of four or five visibility surfaces simultaneously.

The strategic implication: the bar for what counts as “good enough to publish” is rising, and the cost of falling below it is compounding. Quality-first sites win across all surfaces. Thin-content sites lose across all surfaces. There’s no longer a middle.

What to take from this

The deindexing wave appears to be real, even if Google’s official position is that it isn’t unusual. Both can be true. The pattern matches a documented Google behavior, index purges of low-engagement content around core updates, and the trend is structural rather than a one-time event.

Sites that ship clean URLs, complete structured data, llms.txt for AI ingestion, working redirect pipelines, and content that earns its place in the index are positioned to weather the next wave. Sites that don’t will keep watching their Search Console graphs slope downward.

The McCrossenSEO™ plugin and the McCrossen Marketing Platform are built around exactly this premise — not as a reaction to the 2026 deindexing news, but because the architecture has been pointing this direction since v3 scoping started. The deindexing wave is the news. The response is already shipping.